“We don’t have time to write tests!”

Atolye15 has been around since 2009. As a team that believes in continuous improvement, we have learned over time to do properly the things we used to do poorly, and to do even better the things we already did well. Every day, we keep trying and learning new things. Still, we must admit that there may be things we don't yet do well enough.

In fact, this is a challenge for almost every company and developer. We all laugh when we see jokes about previous developers. The point, however, is that some companies are just beginning to improve their processes, some are in the middle of it, and some don't engage in such a process at all and have no intention of doing so. For developers, that last situation is a sure source of frustration.

In this article, I will talk about our own experiences, the common problems we have heard about in the hundreds of job interviews we have conducted, and how we approach them. Before delving into these problems, it is worth noting that these are our own, entirely subjective perspectives, and they may not suit another process or community.

If you’re ready, let’s get started!

We don’t have time to write tests

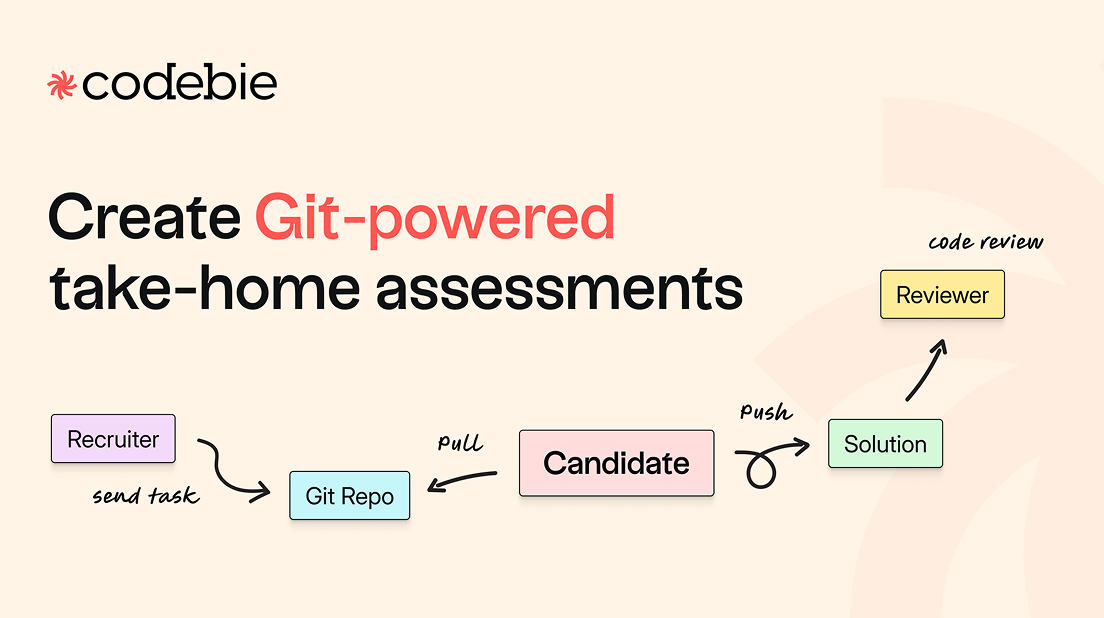

This is by far the most common sentence we hear in job interviews. Whenever a conversation about testing comes up, the first thing we usually hear is: "Actually, I want to write tests, but my company won't let me because it would take too much time. So, I write tests for my hobby projects."

In our view, this way of thinking is flawed in two respects. First, before we even get to the benefits of tests, consider this: just as developers start their journey writing spaghetti code and learn from their mistakes how to write more sustainable production code, the same is true for tests. When thirty tests break because of a change to the production code, a developer should, over time, experience and learn that those tests were constructed incorrectly, focusing on the production code instead of the behavior.

There are many types of tests and many testing methods. Learning to write sustainable tests takes frequent practice.

The second point is the “We don't have time” issue that gives this article its title. Admittedly, an increment developed with adequate test coverage takes more time than one developed without tests. How much more depends on the team's testing experience and on whether the feature is well defined. This leaves us with a tradeoff:

- Deliver the increment quickly, test it manually to make sure it works as expected, and keep checking manually for regressions indefinitely.

- Spend longer writing unit, integration, and E2E tests to be confident that the feature and all its scenarios work, to be alerted to any regression that occurs from then on, and even to reduce the chance of regressions in the first place. The disadvantage, of course, is that the test code must be maintained too.

Faced with this choice, we always opt for the second option. The tests we skip in the short term because we don't have time turn into even more "We don't have time" excuses in the long term, because we end up spending our time fixing bugs and introducing new ones along the way.

We have a deadline to catch

The second most common complaint we hear in job interviews comes in several forms: "We need to meet the deadline," "We are constantly adding features, so we cannot refactor," and "We need to finish somehow." Our intention is never to speak ill of deadlines, because a deadline usually has a sound reason behind it. Perhaps there is an event to attend, and the budget and all the marketing plans were built around that date. We could list endless justifiable reasons.

In our opinion, when a deadline is at risk, the right move is to reconsider our priorities instead of turning it into a labor problem such as "Then let's go faster." The healthiest approach is to redo the release plan together with everyone involved in the process, answering questions such as “What absolutely must be done by this date?”, “What can we postpone?”, and “Can this feature really work without these three scenarios?”

We also think it is better to set a few small milestones on the way to the deadline rather than a single deadline. These smaller goals show more clearly how far you are from the main goal and let you review your timeline continuously. We have also found that, for team motivation, smaller and closer goals work better than one distant goal.

Compromising code quality to meet a deadline is perhaps the most common response in this situation. Unfortunately, it has several negative consequences.

- Every test we don't write comes back to us as a regression, and every new feature adds new bugs to the system. This is not to say that well-run projects have no bugs; we are only talking about bugs that could easily have been prevented.

- Every piece of code written without thinking about the future actually creates more deadline pressure, because extending code that was not properly planned becomes harder and harder as time passes. So, to escape the initial time pressure, we create ever-growing deadline pressure.

- Every refactoring we skip makes the team more afraid to refactor. Over time, so many places need updating that no one wants to take on the responsibility. At the end of the day, we arrive at our next topic: “We need to rewrite this.” And that means a new deadline of its own.

We need to rewrite this

We have to admit that we have said this a few times ourselves. At Atolye15, we don't claim to have always done things best. We have made many mistakes and lived with their consequences. Frankly, I think the desire to do things well usually grows out of the lessons learned from poor processes we have been through ourselves or heard about from others.

"We need to rewrite this" is a result. It may not always be a correct assessment, but let's assume it is. Then we need to focus on its causes, which we can group under a few headings.

Unwritten tests

We must accept that every piece of code will eventually become legacy code. What matters is being able to refactor the codebase continuously. That requires two things: first, a team with the courage and willingness to refactor, and second, reliable tests that give it that courage. In teams where refactoring is risky, people gradually stop volunteering to do it, because if something breaks in the code they refactored, it becomes their responsibility, and they find themselves saying, "I wish I had never touched it." Eventually, people just handle their own tasks, leaving code they consider bad in the codebase and continuing to build on it.

As time passes, adding anything new, let alone refactoring, becomes torture. One day, unwilling to endure it any longer, the team starts clinging to the phrase "We need to rewrite this." But as mentioned above, this is a result. If the team rewrites without addressing the causes behind it, it will probably find itself saying "We need to rewrite this" once again.

Every team that trusts its tests finds itself refactoring constantly, because the effects of each change are immediately visible. As long as the tests stay green, the code can be restructured again and again as needed.
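To make this concrete, here is a minimal Python sketch. The `LineItem` type and the `order_total_v1`/`order_total_v2` functions are hypothetical and not taken from any real codebase. The checks exercise only inputs and outputs, so the very same checks can confirm that a refactored implementation still behaves like the original.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LineItem:
    """Hypothetical order line: a price in cents and a quantity."""
    unit_price_cents: int
    quantity: int


def order_total_v1(items: list[LineItem]) -> int:
    """Original version: accumulates the total in an explicit loop."""
    total = 0
    for item in items:
        total += item.unit_price_cents * item.quantity
    return total


def order_total_v2(items: list[LineItem]) -> int:
    """Refactored version: same behavior, expressed with sum()."""
    return sum(item.unit_price_cents * item.quantity for item in items)


def check_order_total(order_total) -> None:
    """Behavior-level checks: they look only at inputs and outputs,
    so they apply unchanged to any implementation."""
    assert order_total([]) == 0
    assert order_total([LineItem(250, 2)]) == 500
    assert order_total([LineItem(250, 2), LineItem(100, 3)]) == 800


# The same checks stay green before and after the refactoring.
for implementation in (order_total_v1, order_total_v2):
    check_order_total(implementation)
print("both implementations pass")
```

Because the checks never look inside the function, the loop can be replaced with `sum()`, or with anything else, without touching them.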

Tests written incorrectly

Although we prefer poorly written tests to no tests at all, when code changes that do not alter behavior cause a large number of tests to fail, the tests themselves are probably written incorrectly. Tests that are too code-oriented check "Did I write the code in my head correctly?" rather than "Does the system work correctly?" So when we start refactoring and arrive at a different way of writing the code, those tests start failing. In codebases where this keeps happening, it is only a matter of time before the tests are ignored and stop being a reliable source of truth.
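The difference between the two kinds of test can be sketched in Python. The `Cart` and `RefactoredCart` classes and both tests are hypothetical illustrations: the refactoring swaps a plain dict for a `collections.Counter` without changing the public `add`/`count` behavior. The code-oriented test breaks on the refactored class, while the behavior-oriented test stays green.

```python
from collections import Counter


class Cart:
    """Original version: stores quantities in a plain dict."""

    def __init__(self) -> None:
        self._quantities: dict[str, int] = {}

    def add(self, product: str, quantity: int = 1) -> None:
        self._quantities[product] = self._quantities.get(product, 0) + quantity

    def count(self, product: str) -> int:
        return self._quantities.get(product, 0)


class RefactoredCart:
    """Refactored version: same public behavior, but backed by a Counter."""

    def __init__(self) -> None:
        self._items: Counter[str] = Counter()

    def add(self, product: str, quantity: int = 1) -> None:
        self._items[product] += quantity

    def count(self, product: str) -> int:
        return self._items[product]  # Counter returns 0 for missing keys


def code_oriented_test(cart_cls) -> None:
    # "Did I write the code in my head?": coupled to the private
    # attribute name and layout of the original implementation.
    cart = cart_cls()
    cart.add("apple", 2)
    assert cart._quantities == {"apple": 2}


def behavior_test(cart_cls) -> None:
    # "Does the system work?": uses only the public API.
    cart = cart_cls()
    cart.add("apple", 2)
    cart.add("apple")
    assert cart.count("apple") == 3
    assert cart.count("pear") == 0


def passes(test, cart_cls) -> bool:
    """Run one test against one class and report whether it succeeded."""
    try:
        test(cart_cls)
        return True
    except (AssertionError, AttributeError):
        return False


for cls in (Cart, RefactoredCart):
    print(cls.__name__, "code-oriented:", passes(code_oriented_test, cls),
          "behavior:", passes(behavior_test, cls))
# Cart code-oriented: True behavior: True
# RefactoredCart code-oriented: False behavior: True
```

Nothing about the cart's observable behavior changed, yet the code-oriented test fails; multiplied across a test suite, this is how a harmless refactoring can turn thirty tests red.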

Code written as if there is no tomorrow

Teams that are constantly adding features may find themselves always trying to catch up. After a while, this can lead to symptoms such as indifference: people focus only on getting the job done rather than on the quality of the work. As you can imagine, this makes the process inefficient.

Time pressure could be added under this heading too, with much the same results. I covered how we deal with time pressure in the sections above, but there is more to say about teams that focus only on adding new features.

Applications inevitably evolve over time; they may pivot, and revisions get revised in turn. The product we develop is a living entity, so its target audience, the problem it tries to solve, and the way it solves that problem all change over time. We cannot object to this. What we should keep in mind, however, is that we need to devote some of our time to improving the existing codebase and features. When a team knows something is wrong, its attention inevitably stays stuck there, and if it is asked to add something new instead of fixing it, it will not be very enthusiastic. If this becomes the norm, the disengagement described above may set in, and the team may start working like robots.

Wrapping up

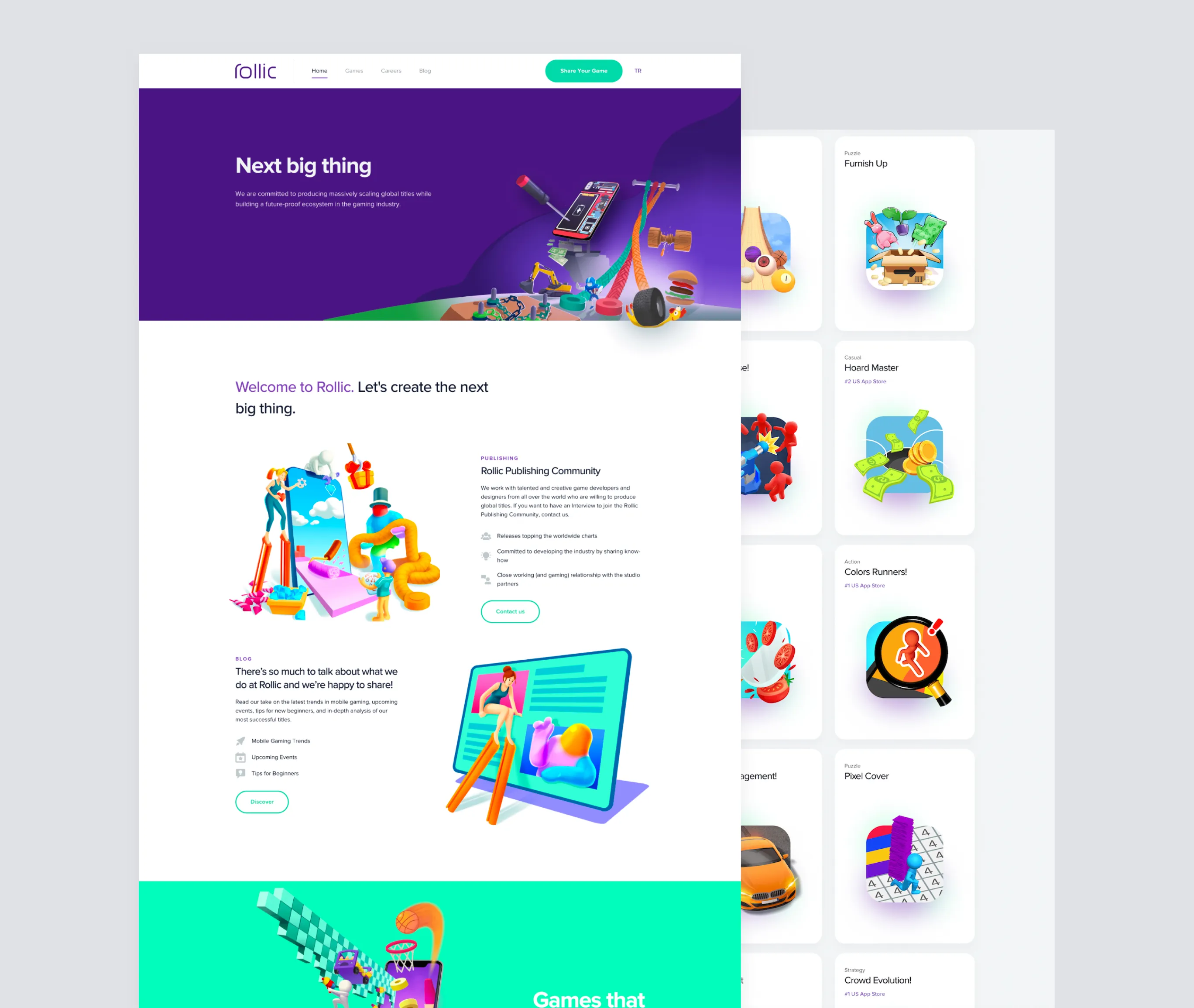

There are many more such topics that we all know about or can guess. We have experienced some of them first-hand and heard about others, and we are generally aware of these problems. We are trying to solve them and improve our processes as much as we can. If you want to contribute to this, you can apply to join our team through our Careers page.